Local-first · No login · Works with any agent

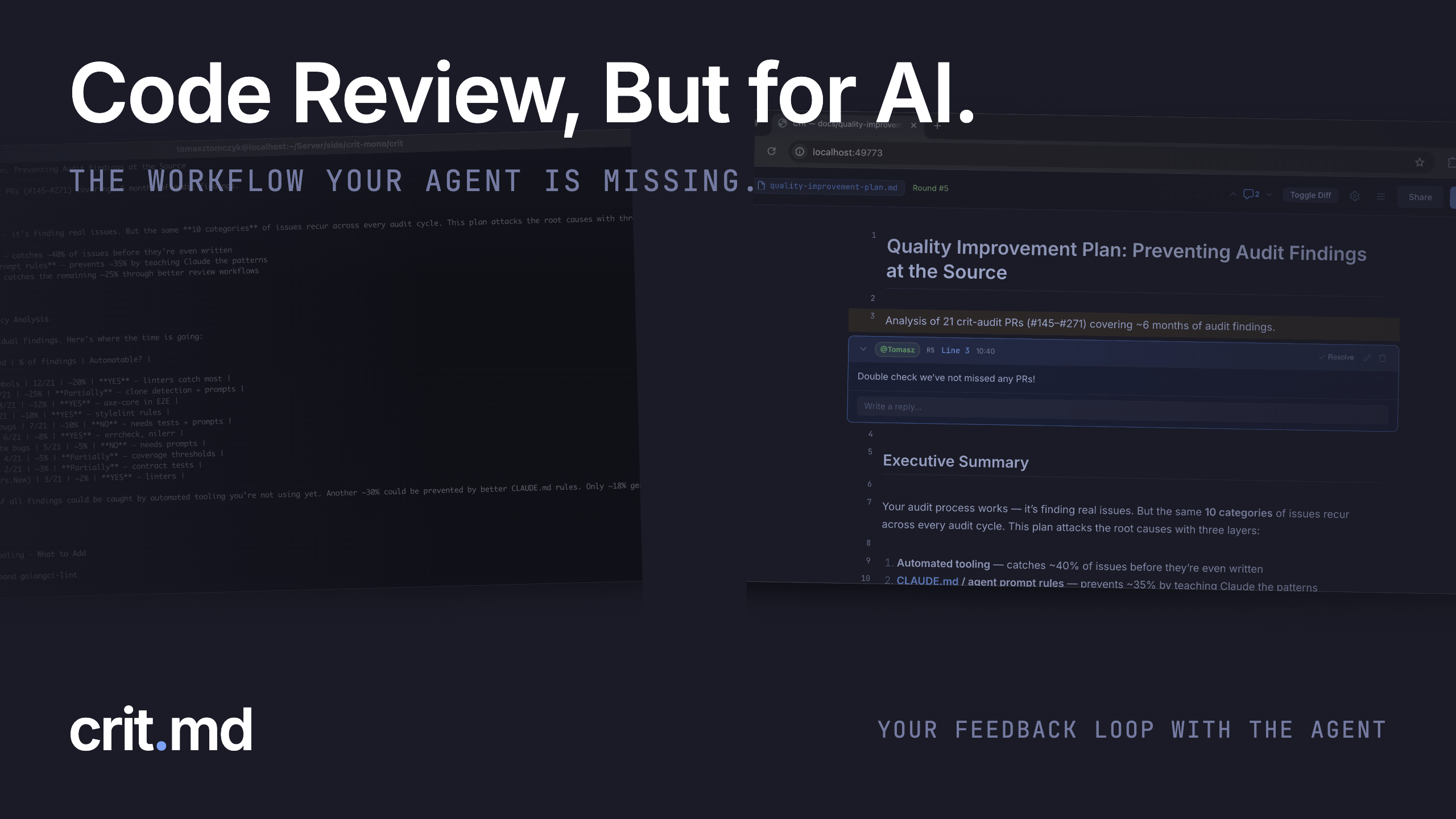

Your feedback loop with the agent.

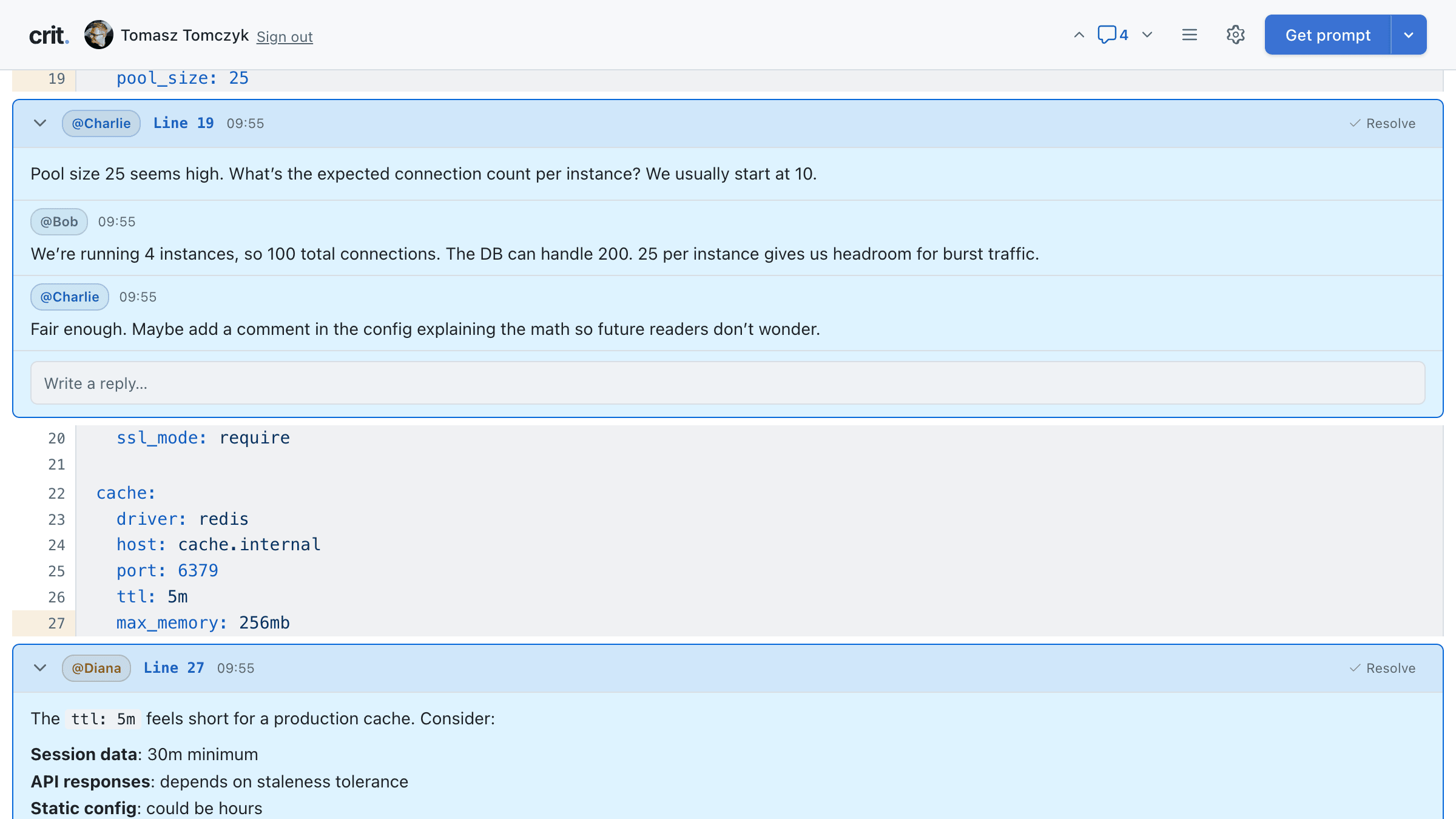

Review plans before your agent writes code. Review code before you ship it. Leave comments, click Finish Review, and your agent acts on the feedback automatically. Repeat as needed. Local by default.

Install

Single binary. Reviews stay on your machine; sharing is opt-in.

$ brew install tomasz-tomczyk/tap/crit

$ crit

or

$ crit plan.md

Or download a pre-built binary from GitHub Releases.

Connect your agent

Add skills to teach your agent how to use Crit.

The core loop

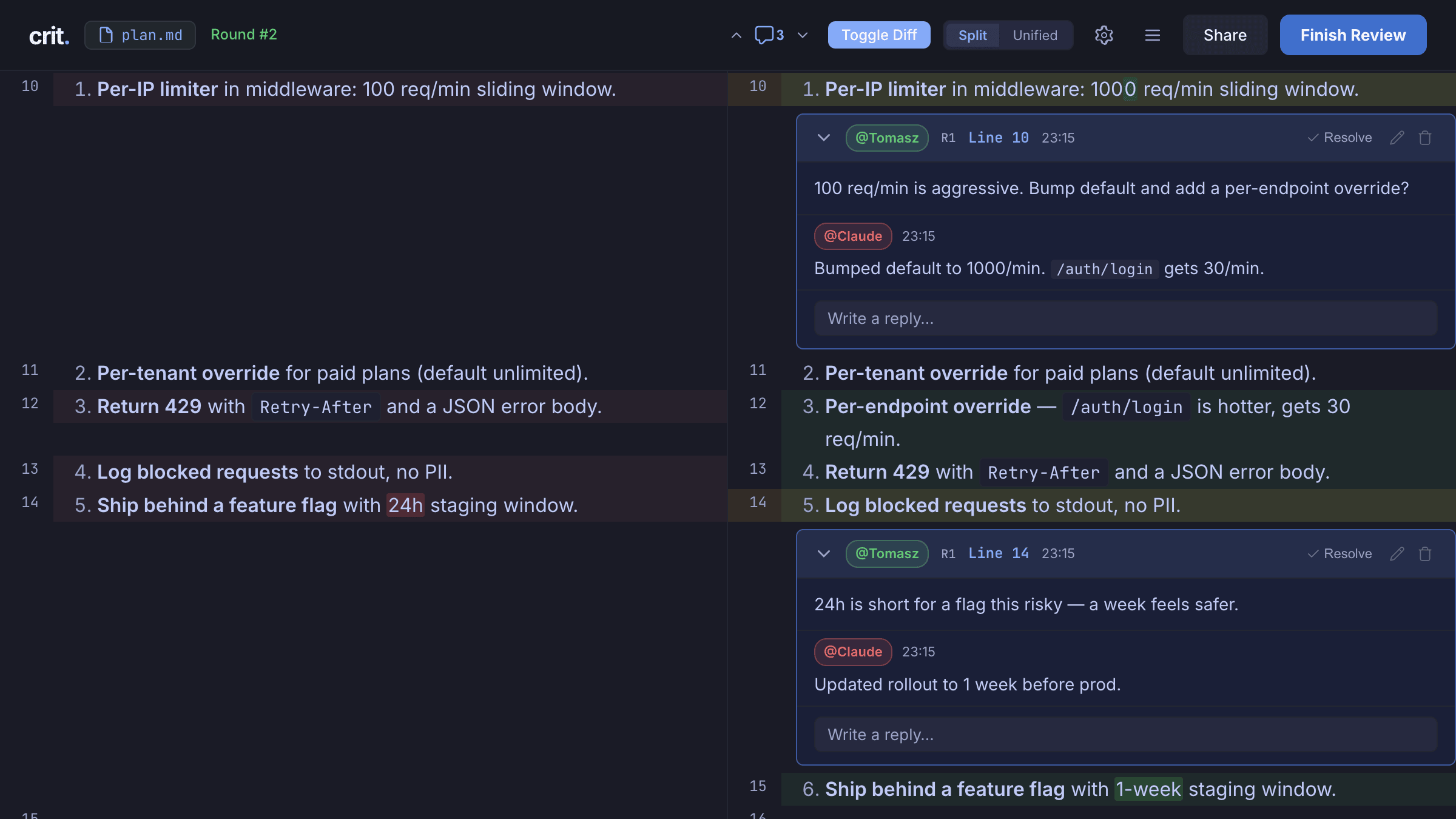

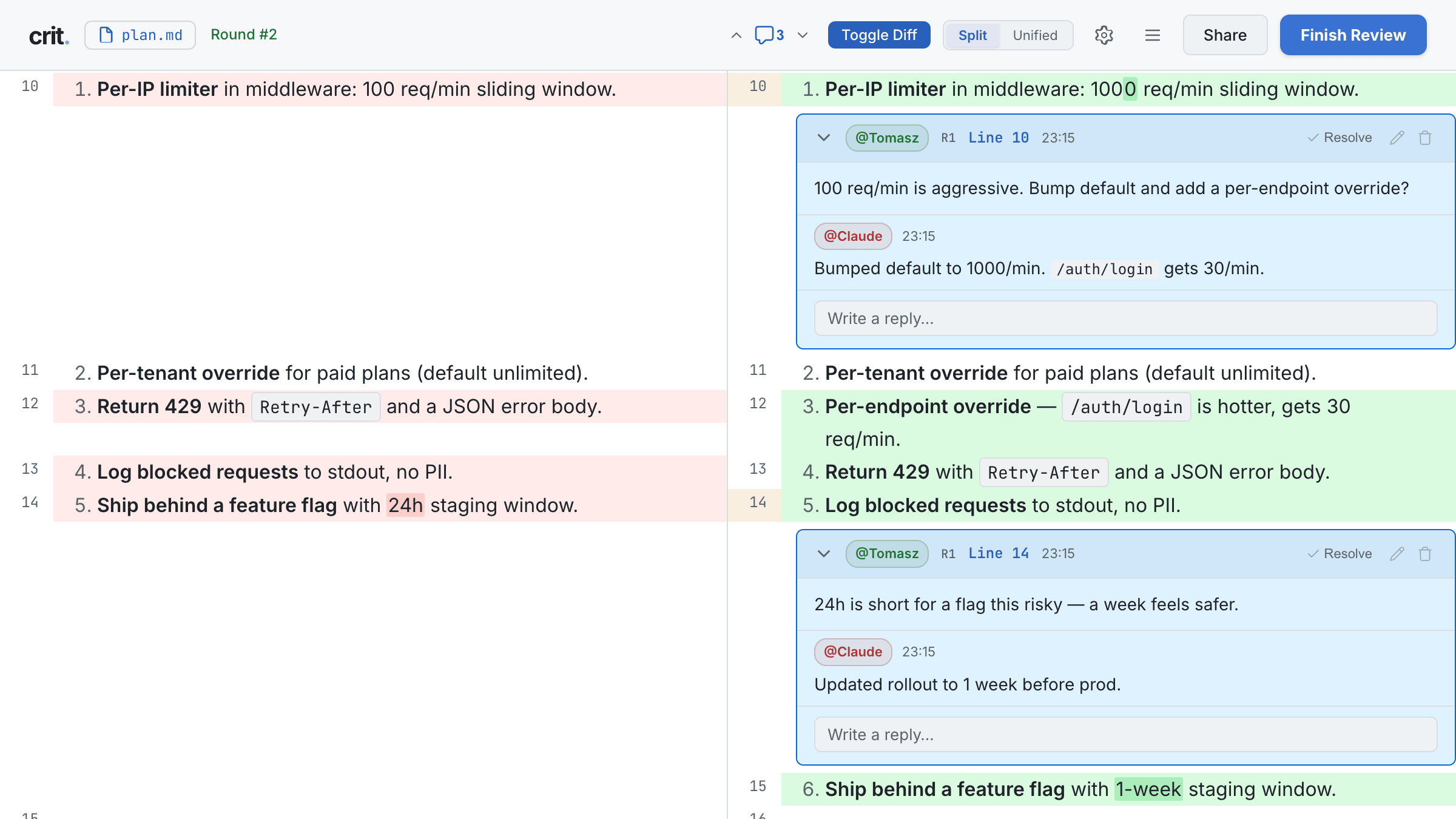

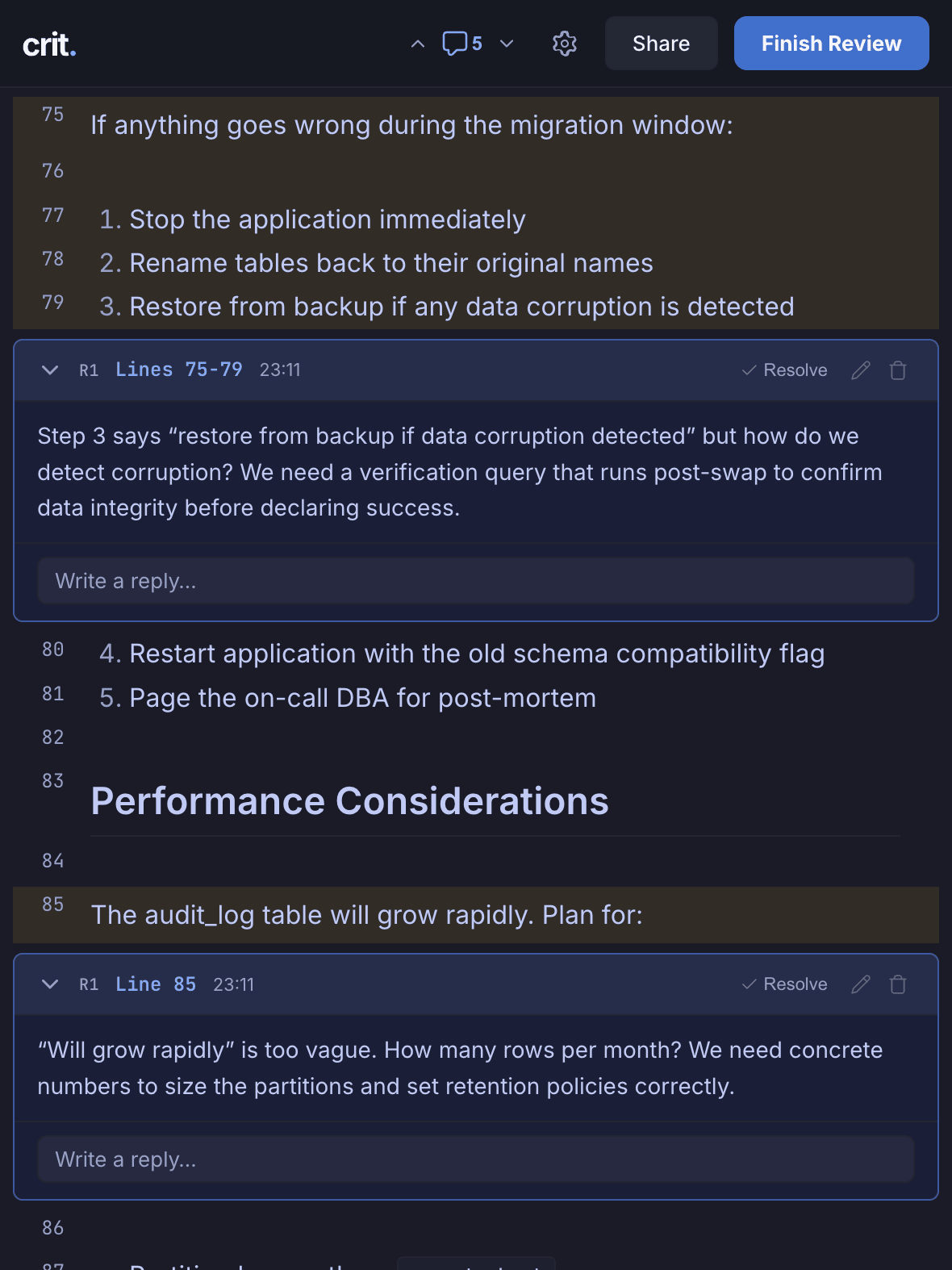

Comment in. Agent updates. Diff out.

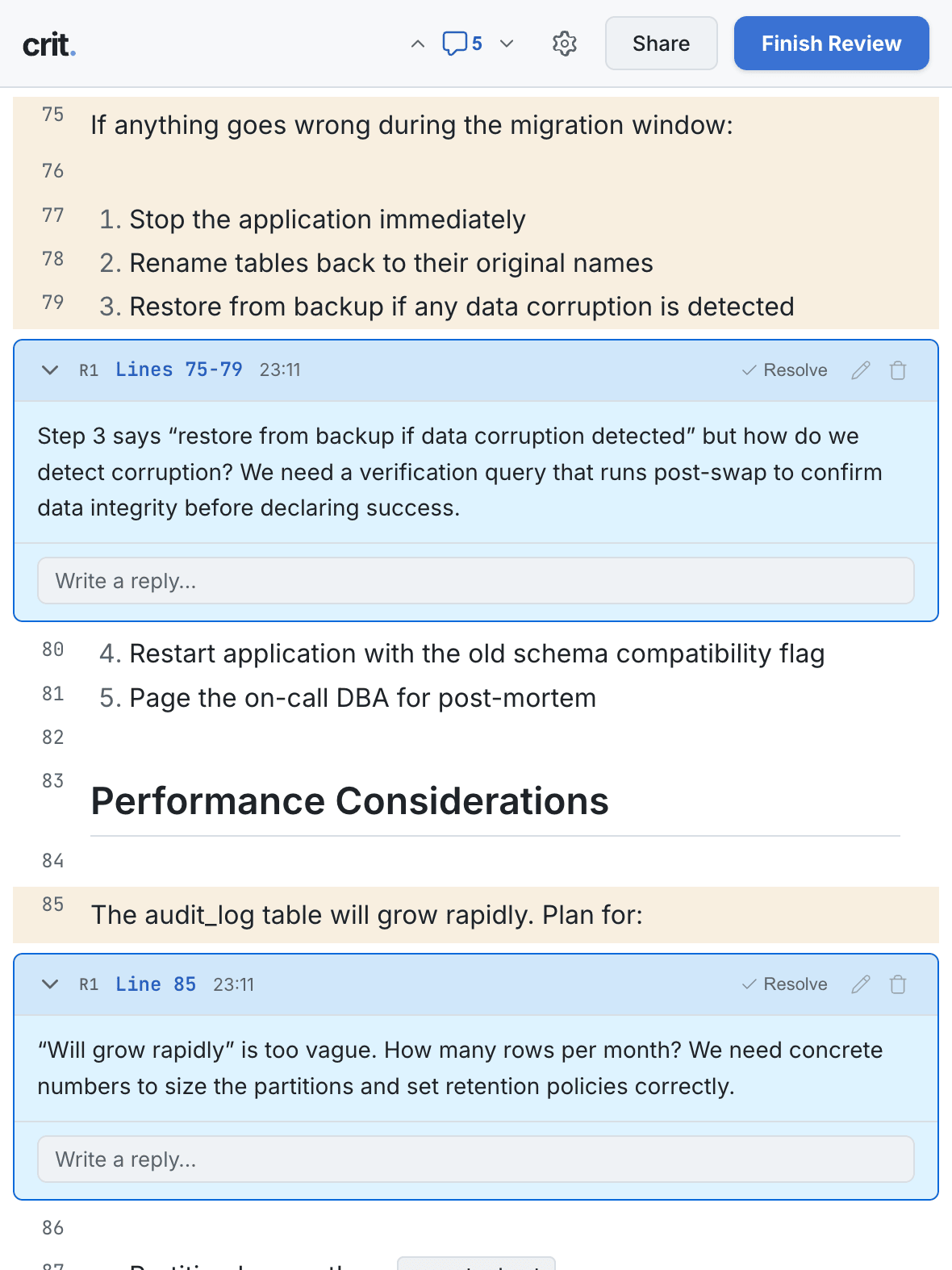

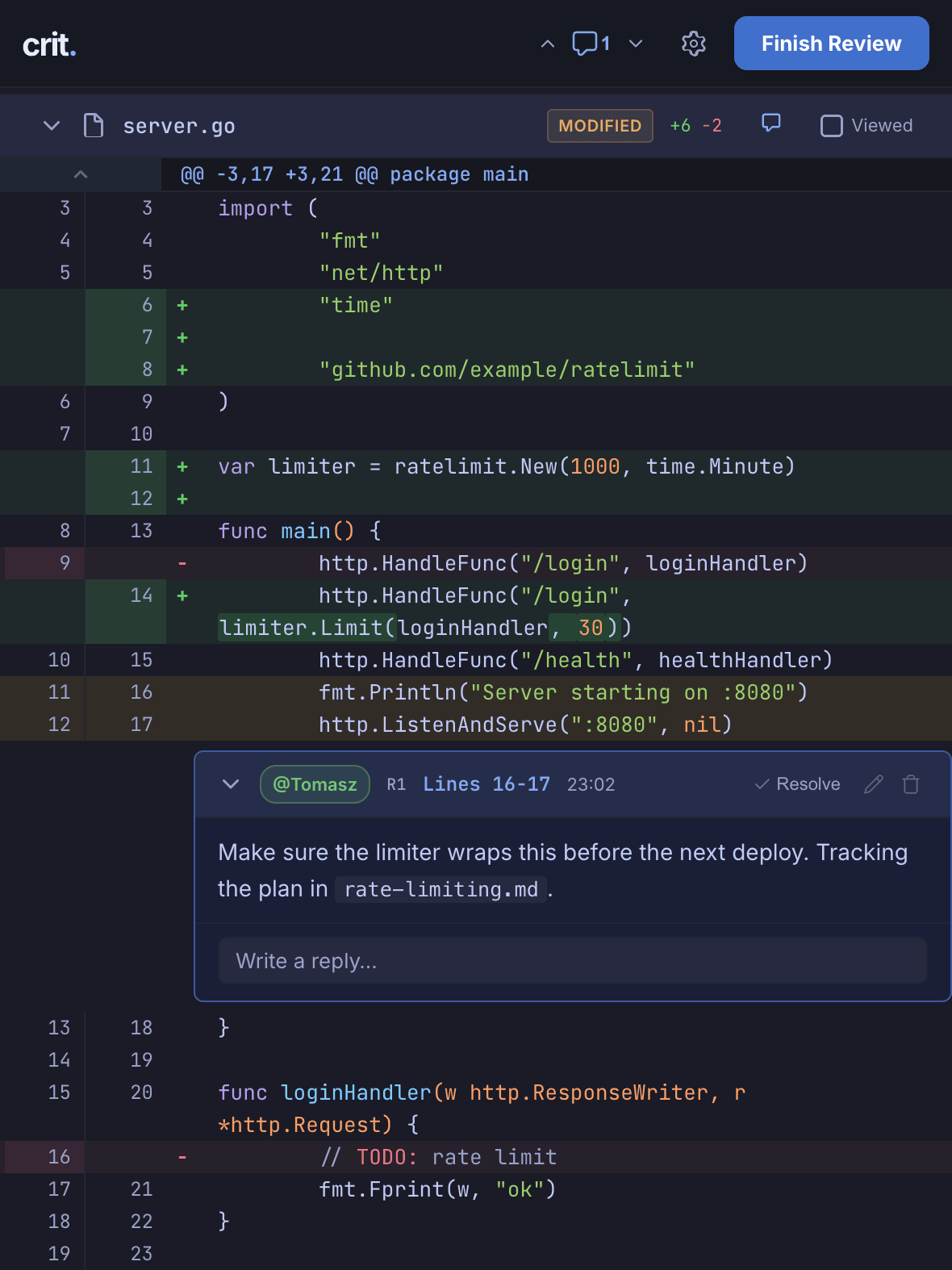

Drag across a range of lines, leave comments, and hit Finish Review. Your agent picks up the feedback, edits the file, and Crit reloads with a diff so you can keep iterating without losing context. Previous comments stay visible; resolve them only when you're satisfied.

Works great for plans and code.

Markdown renders beautifully, and source files get syntax highlighting. The review UI is the same for both, so you don't switch tools mid-feature.

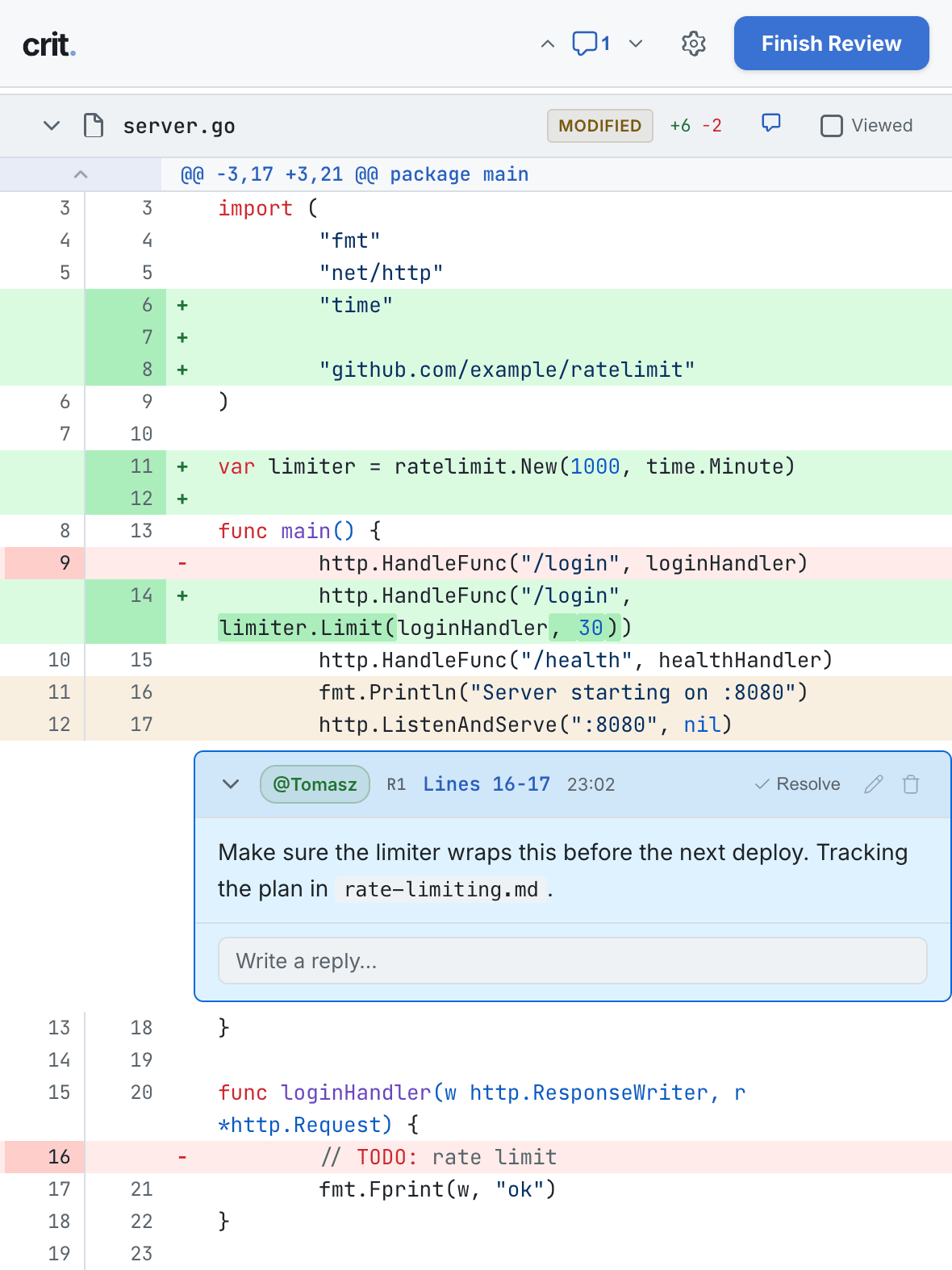

Share reviews for a second opinion.

One click generates a public link. The reviewer on the other end needs no install and no login. When they're finished, continue locally with your agent.

Works with every agent

Claude Code, Cursor, Copilot, Aider, Cline, Windsurf, or anything that reads files. One command sets up the skill so your agent can launch Crit and listen for feedback.

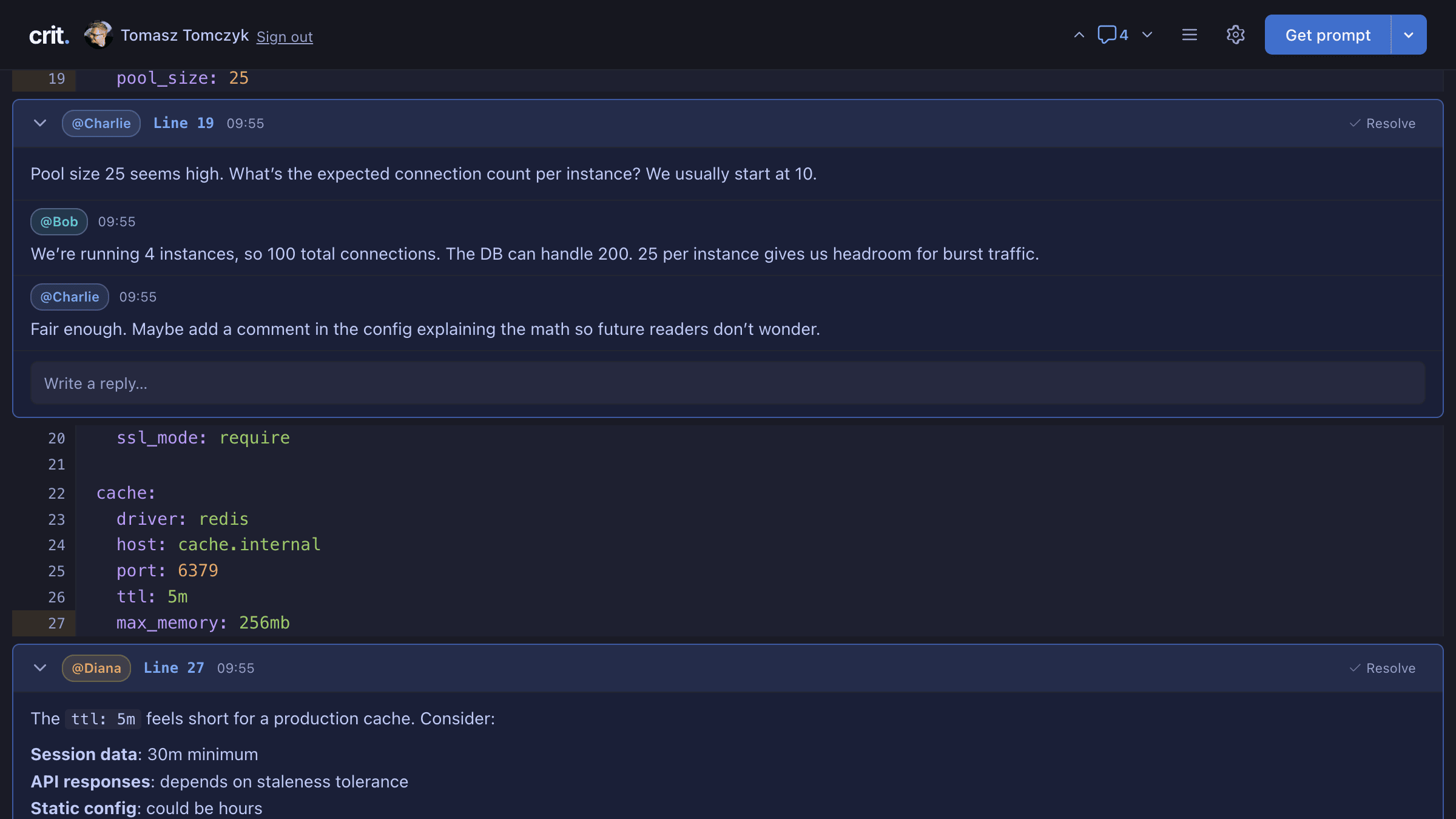

Multi-round & concurrent

Iterate over multiple rounds of feedback per file. Run several reviews in parallel without them stepping on each other — useful when you're juggling a few agents at once.

Local-first, optionally shared

Reviews live on your machine until you choose to share. Skip sharing entirely, or self-host the share target (crit-web) on your own infrastructure. No account required.

Plus the small stuff that adds up

- Vim keybindings

- Git diffs (split & unified)

- Round-over-round diffs

- Syntax highlighting (190+ langs)

- Mermaid diagrams

- Table of contents

- Dark & light themes

- Draft autosave

- Insert suggestions

- Single binary install

Built by people who review a lot of AI output.

Hey, I'm Tomasz!

I've been building software for over 25 years. AI changed what it means to write code, but reviewing the output is harder than writing it yourself: agents produce plausible-looking diffs faster than you can read them carefully.

I built Crit to make careful review the easy path again. It improves every week thanks to feedback from engineers who live in this loop.

A clean local UI to batch my feedback and iterate.

Crit saves me so much time reviewing Claude Code plans - instead of fumbling with line numbers or accidental sends, I get a clean local UI to batch my feedback and iterate, all without leaving my workflow.

Omer

Principal Engineer

Review and iterate on plans, down to specific sections.

I use Crit daily to tighten feedback loops with my coding agent. Being able to review and iterate on plans, down to specific sections, or entire spec folders has made AI-assisted development feel fast and controlled.

The integration into my Claude Code setup is seamless and just clicks.

Ullrich Schäfer

Engineering Manager @ Pitch

It's like a pull request review but for your plan.

I've been using Crit to review plans for some time. I use Claude Code in the command line without an IDE, so being able to quickly check the rendered plan is super nice.

The system allowing you to add comments is the killer feature: it's like a pull request review but for your plan.

On long, complex plans I used to ask Claude things like "on point 3., we should do X, drop point 7., ...". Using comments makes feedback more straightforward and easier to review later.

Vincent

Senior Software Engineer

Genuinely game changing for agentic workflows.

Crit feels genuinely game changing for agentic workflows. It just works.

It fits into my setup with basically zero friction, and makes reviewing plans feel fast, natural, and way less annoying than pushing code to GitHub just to leave feedback. It's so easy to use that I give my agents feedback with it at every iteration of the process.

The collaborative features are still a little early, but they're already kind of amazing. It's the kind of tool that makes me surprised the major AI labs aren't building this into their harnesses themselves.

@vereisyaps

Tech Lead

Shared to crit.md

So far, 129 shared reviews with 400 inline comments across 78,406 lines of code — roughly 4.0 MB of plans, diffs, and prose.